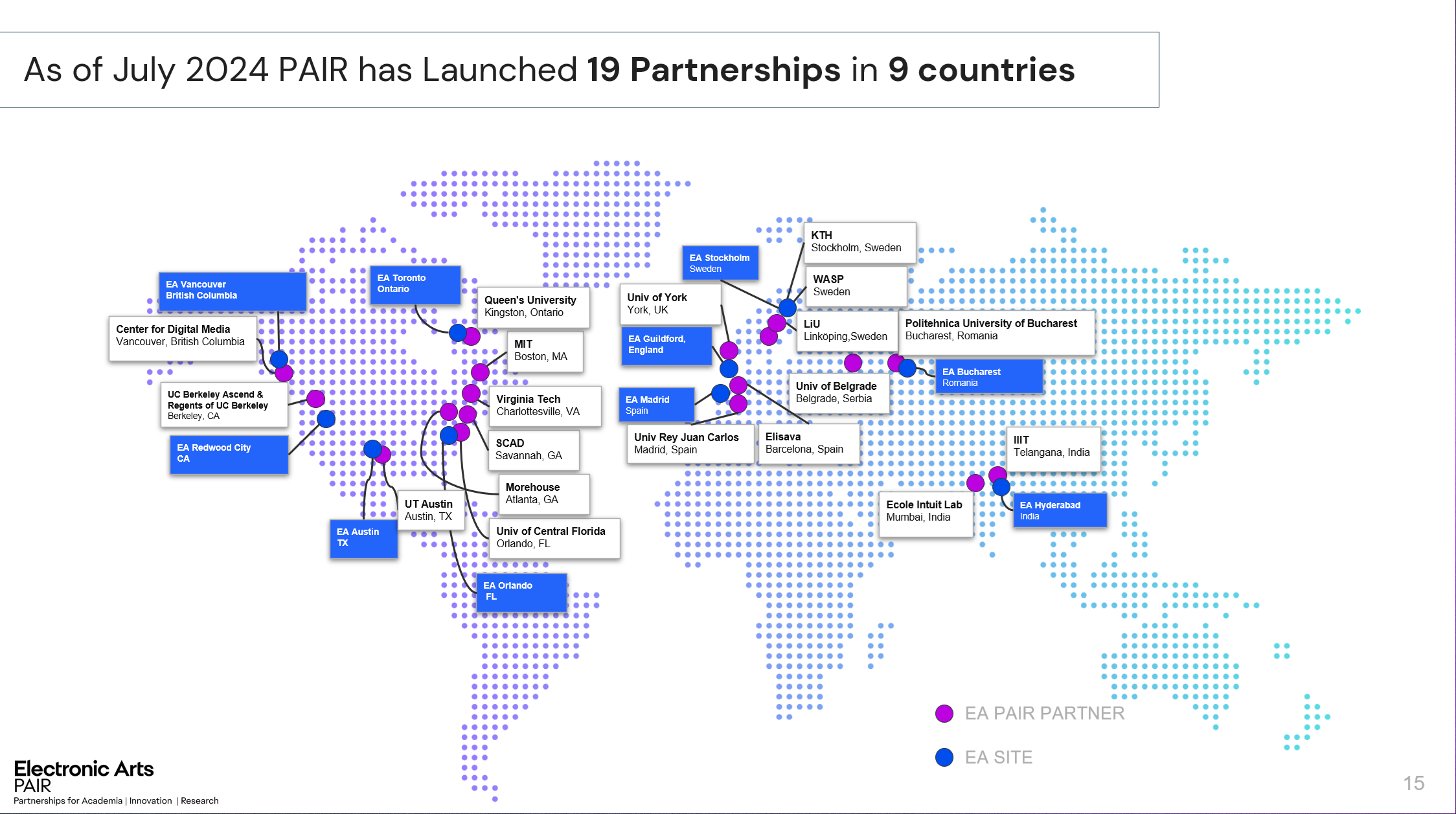

SIGGRAPH 2024: Academic Partnerships and Talent Development at EA

SEED (EA), as a Champion Sponsor of SIGGRAPH 2024, supports the incredible community of computer graphics. SIGGRAPH has always been a place where creativity and technology intersect.

I participated in the Educator’s Day Session panel, where I had the chance to share how our PAIR program at EA drives partnerships with universities and research institutions to spark inspiration, build meaningful partnerships, and create opportunities for early-career talent. AI and computer graphics continue to evolve rapidly, and I highlighted their growing role in the video game space. I also emphasized the importance of deeper collaboration between academia and industry.

I3D 2021 – Keynote: Trace All The Rays! State-of-the-Art and Challenges in Game Ray Tracing

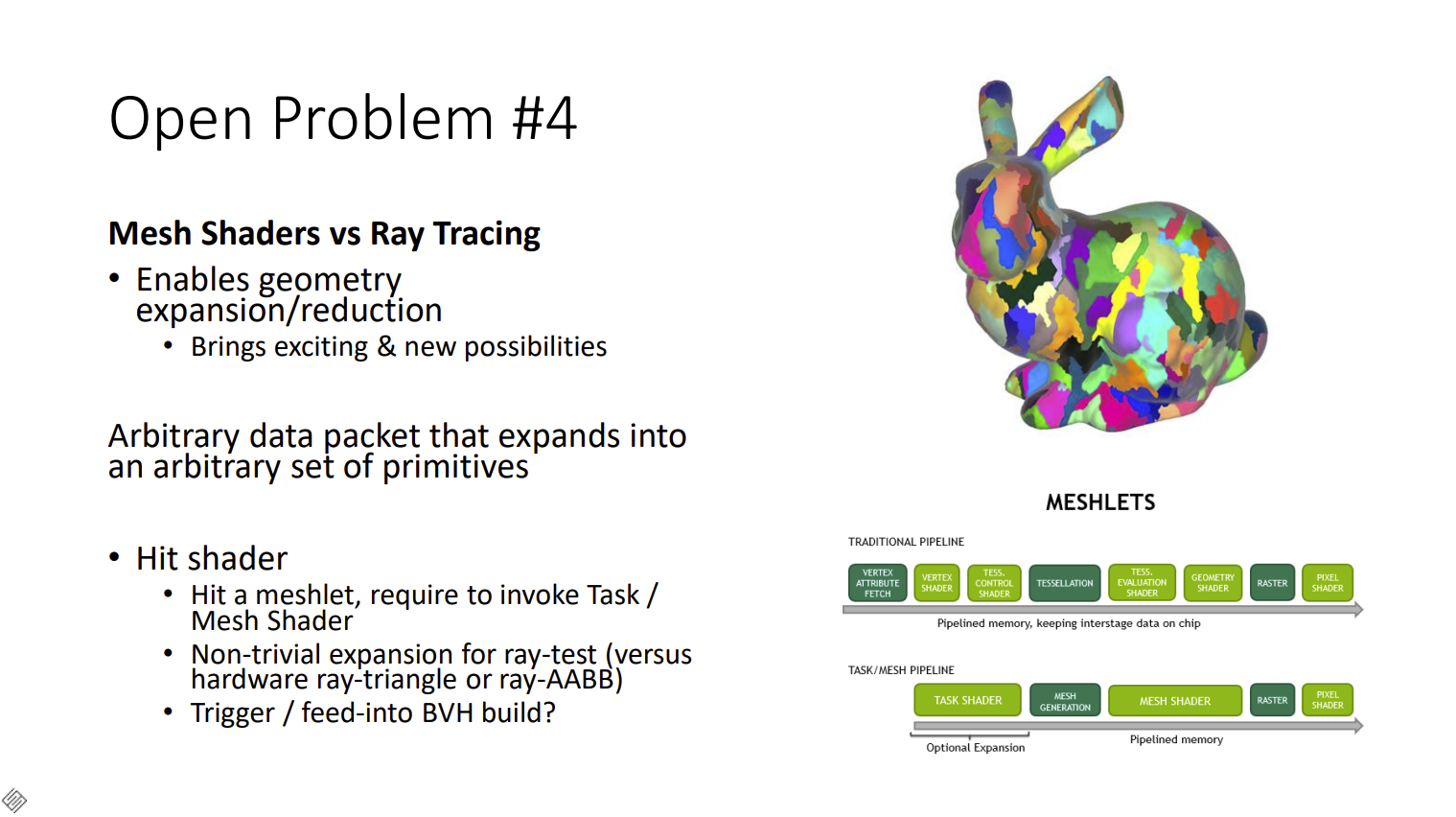

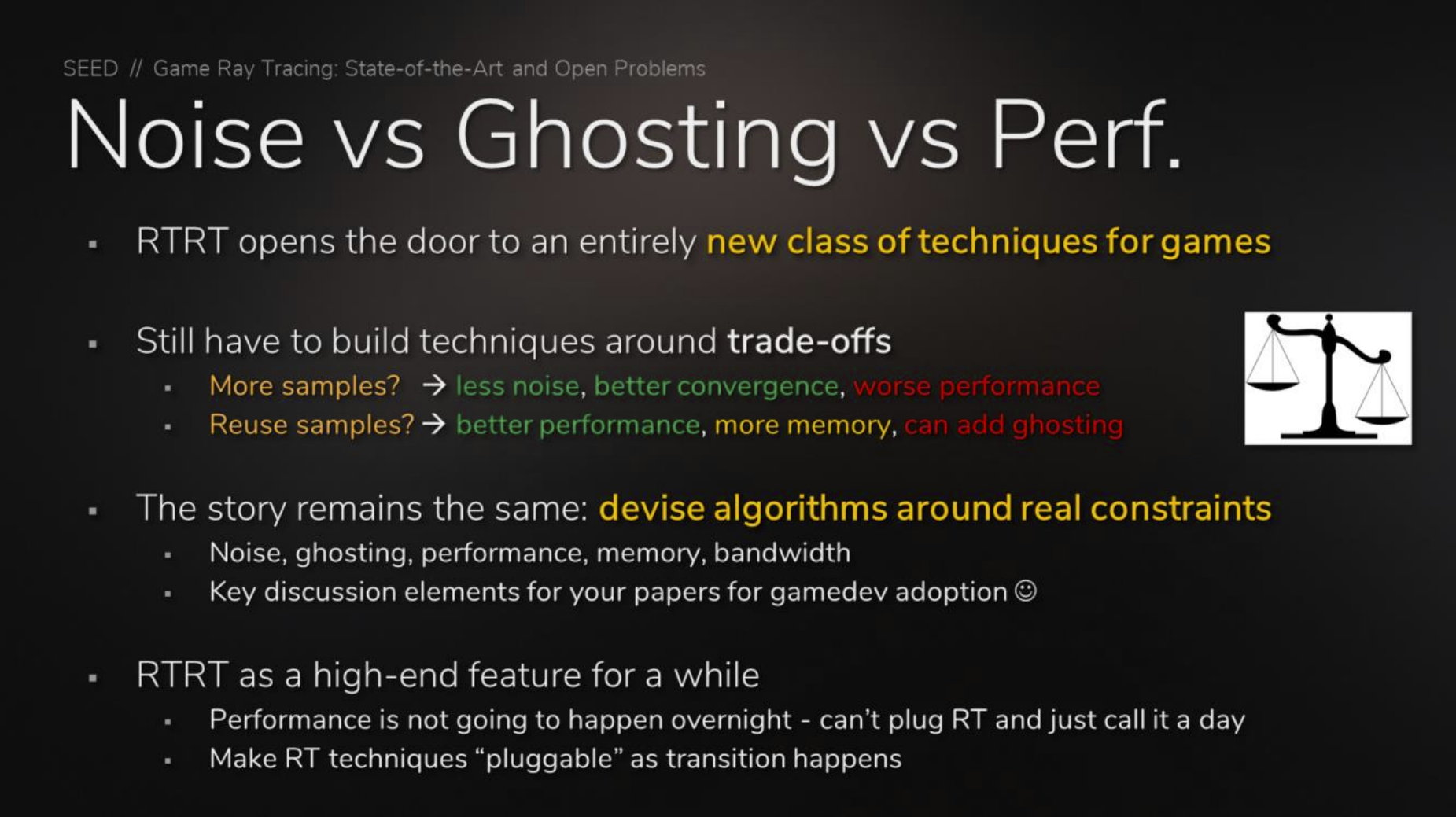

Since 2018, a paradigm shift has happened in the world of real-time graphics: real-time ray tracing. From new APIs to hardware acceleration, many techniques have made their way from the offline world into real-time applications, such as video games and industrial visualization. In parallel, new approaches have also emerged that enable visuals that take us closer to film quality in real-time. In this talk, we will explore the current state of real-time ray tracing and the progress that has been made since its inception. From a research perspective, we will discuss some core challenges that remain as the industry strives towards achieving film quality visuals in real-time. We also discuss possible solutions to some of the remaining problems with a focus on production. Finally, we discuss industry trends for the next few years and where the research community could invest its efforts. This talk is intended to give the attendee a good understanding of how techniques and research in real-time ray tracing can progress and facilitate further adoption of the technology in video games and real-time experiences.

SIGGRAPH 2019: Texture Level of Detail Strategies for Real-Time Ray Tracing

Presented two methods for handling texture level of detail for real-time ray tracing. Based on the work from Tomas Akenine-Möller (NVIDIA), Jim Nilsson (NVIDIA), Magnus Andersson (NVIDIA), Colin Barré-Brisebois (SEED), Robert Toth (NVIDIA), Tero Karras (NVIDIA). “Texture Level of Detail Strategies for Real-Time Ray Tracing”. Chapter 20 in Ray Tracing Gems, edited by Eric Haines and Tomas Akenine-Möller, Apress, 2019. License: Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License (CC BY-NC-ND 4.0), https://raytracinggems.com

Co-Authors: Tomas Akenine-Möller (NVIDIA), Jim Nilsson (NVIDIA), Magnus Andersson (NVIDIA), Robert Toth (NVIDIA), Tero Karras (NVIDIA).

SIGGRAPH 2019 – “Are We Done With Ray Tracing?” Course: State-of-the-Art and Challenges in Game Ray Tracing

Real-time graphics has come a long way since the “brute-force approach” of rasterization had been classified “ridiculously expensive” in 1974. Henceforth the promise “Ray tracing is the future and ever will be” drove the development of ray tracing algorithms and hardware, and resulted in a major revolution of image synthesis. This course will take a look at how far out the future is, review the state of the art, and identify the current challenges for research. Not surprisingly, it looks like we are not done with ray tracing, yet.

We will present the state of the art in game real-time ray tracing, discuss techniques from shipped products, and show how ray tracing APIs are used. Besides the challenges in real-time game ray tracing, parallels to offline rendering are addressed. Then we review open problems from real-world game production that need to be researched at scale and quality. Finally, we dare to predict industry trends for the next two years and where the research community should invest its efforts.

Presented in the “Are We Done With Ray Tracing?” course.

Course Co-Authors: Alexander Keller (Host, NVIDIA), Timo Viitanen (NVIDIA), Christoph Schied (Facebook), Morgan McGuire (NVIDIA)

Slides, Course Abstract, Video Recording, ACM Library Reference

Ray Tracing Gems – Chapt 25: Hybrid Rendering for Real-Time Ray Tracing

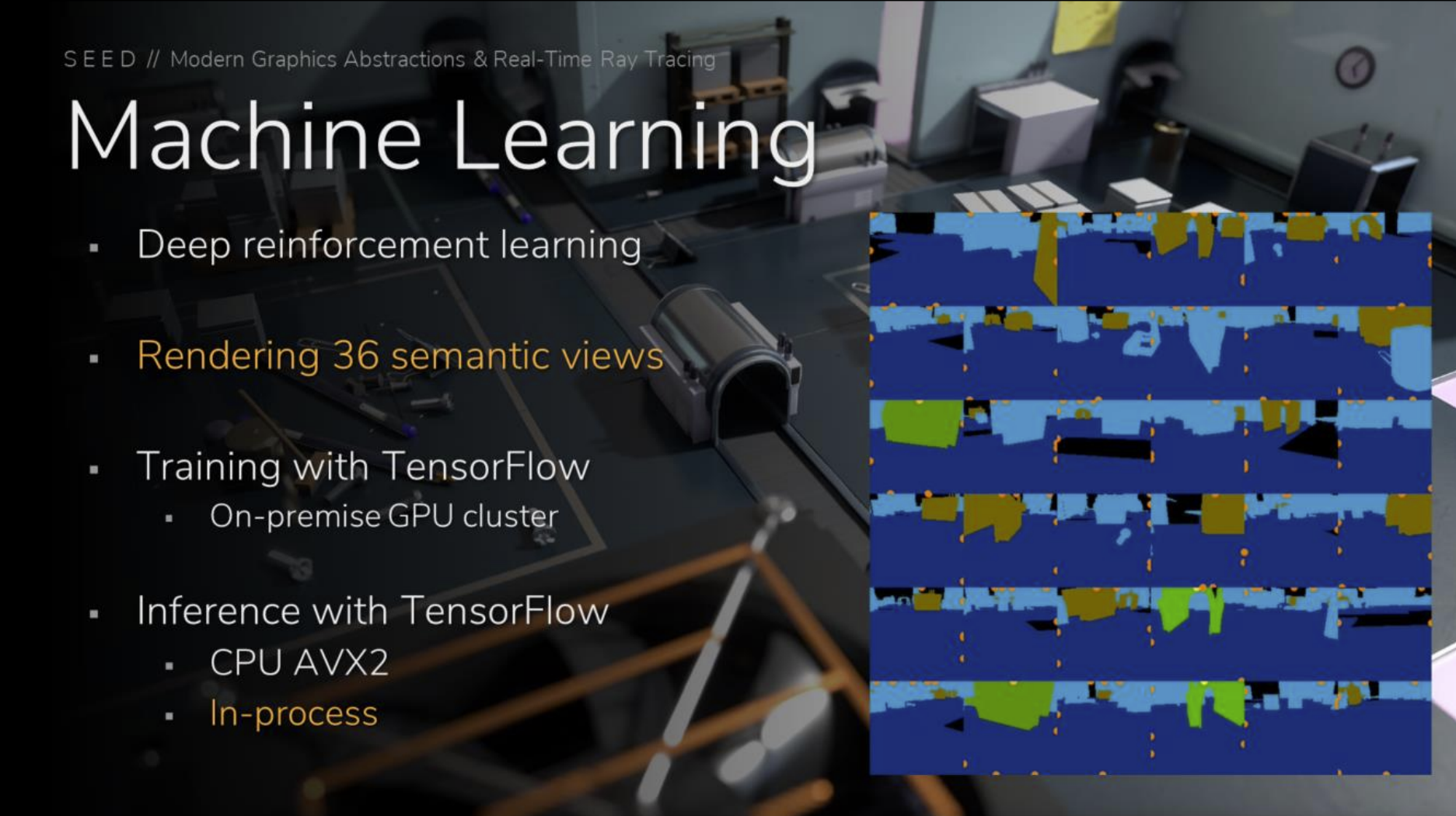

This chapter describes the rendering pipeline developed for PICA PICA, a real-time ray tracing experiment featuring self-learning agents in a procedurally assembled world. PICA PICA showcases a hybrid rendering pipeline in which rasterization, compute, and ray tracing shaders work together to enable real-time visuals approaching the quality of offline path tracing.

The design behind the various stages of such a pipeline is described, including implementation details essential to the realization of PICA PICA’s hybrid ray tracing techniques. Advice on implementing the various ray tracing stages is provided, supplemented by pseudocode for ray traced shadows and ambient occlusion. A replacement to exponential averaging in the form of a reactive multi-scale mean estimator is also included. Even though PICA PICA’s world is lightly textured and small, this chapter describes the necessary building blocks of a hybrid rendering pipeline that could then be specialized for any AAA game. Ultimately, this chapter provides the reader with an overall good design to augment existing physically based deferred rendering pipelines with ray tracing, in a modular fashion that is compatible across visual styles.

Co-Authors: Henrik Halén, Graham Wihlidal, Andrew Lauritzen, Jasper Bekkers, Tomasz Stachowiak, and Johan Andersson.

Paper, Source Code, Book Website

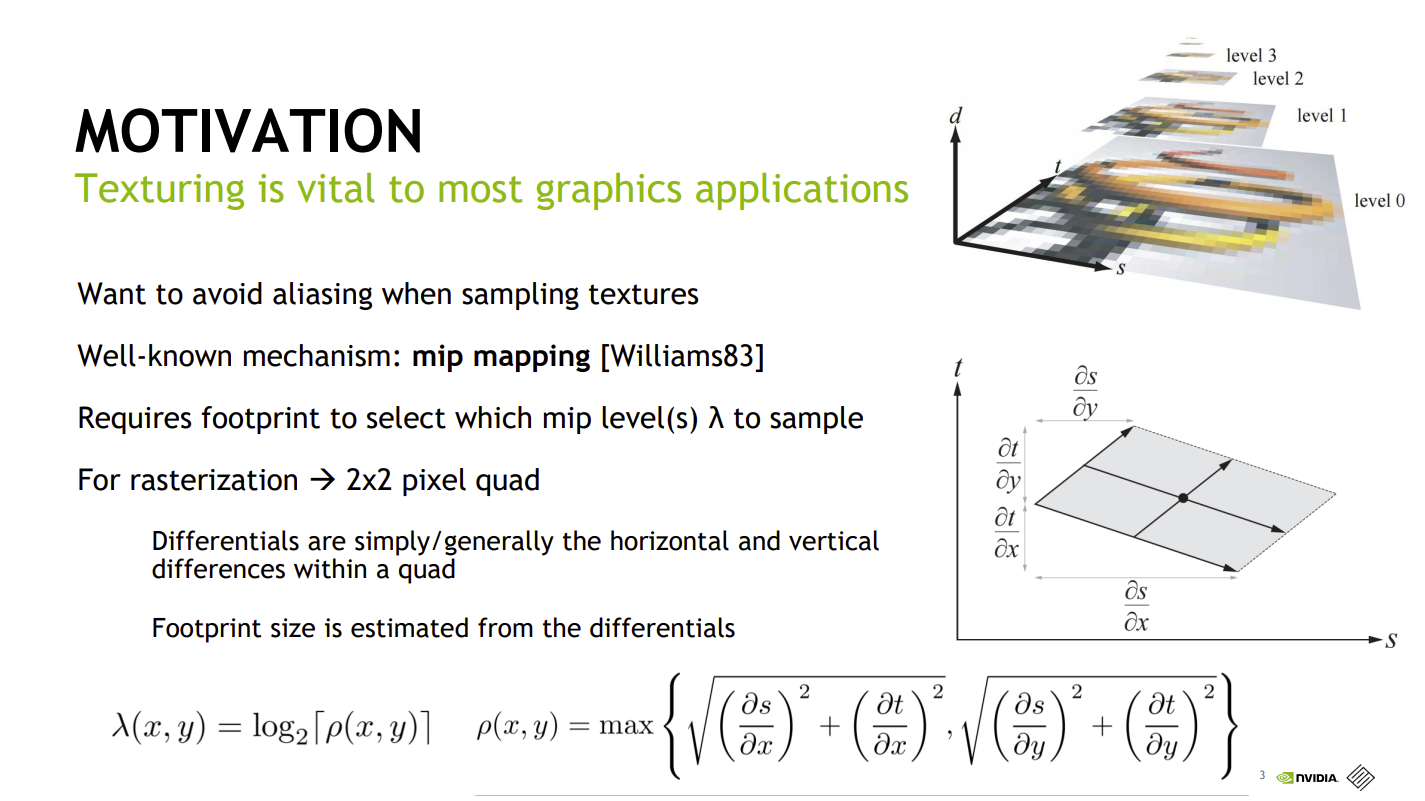

Ray Tracing Gems – Chapt 20: Texture Level of Detail Strategies for Real-Time Ray Tracing

Unlike rasterization, where one can rely on pixel quad partial derivatives, an alternative approach must be taken for filtered texturing during ray tracing. We describe two methods for computing texture level of detail for ray tracing. The first approach uses ray differentials, which is a general solution that gives high-quality results. It is rather expensive in terms of computations and ray storage, however. The second method builds on ray cone tracing and uses a single trilinear lookup, a small amount of ray storage, and fewer computations than ray differentials. We explain how ray differentials can be implemented within DirectX Raytracing (DXR) and how to combine them with a G-buffer pass for primary visibility. We present a new method to compute barycentric differentials. In addition, we give previously unpublished details about ray cones and provide a thorough comparison with bilinearly filtered mip level 0, which we consider as a base method.
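To make the ray cone idea more concrete, here is a minimal Python sketch of how a mip level could be selected from a cone footprint. It is a simplified illustration under stated assumptions, not the chapter's shader code: all function and parameter names are hypothetical, the cone width uses a small-angle approximation, and the result is left unclamped. The chapter itself remains the reference for the full derivation.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def length(v):
    return math.sqrt(dot(v, v))

def cone_width_at_hit(prev_width, spread_angle, distance):
    # Assumption: small-angle approximation, so the cone widens linearly.
    return prev_width + spread_angle * distance

def triangle_lod_constant(p0, p1, p2, t0, t1, t2):
    # Per-triangle term: half the log2 ratio of UV-space area to
    # world-space area (both are doubled areas, so the factor cancels).
    world_area = length(cross(sub(p1, p0), sub(p2, p0)))
    uv_area = abs((t1[0] - t0[0]) * (t2[1] - t0[1])
                  - (t2[0] - t0[0]) * (t1[1] - t0[1]))
    return 0.5 * math.log2(uv_area / world_area)

def ray_cone_lod(cone_width, ray_dir, normal, tri_constant, tex_w, tex_h):
    # ray_dir and normal are assumed to be unit vectors.
    lod = tri_constant
    lod += math.log2(abs(cone_width))                # wider footprint, coarser mip
    lod += 0.5 * math.log2(tex_w * tex_h)            # account for texture resolution
    lod -= math.log2(abs(dot(ray_dir, normal)))      # grazing angles stretch the footprint
    return lod

# Usage: a unit right triangle mapped to the unit UV square corner,
# a 256x256 texture, and a cone exactly one texel wide at normal incidence.
tri = triangle_lod_constant((0, 0, 0), (1, 0, 0), (0, 1, 0),
                            (0, 0), (1, 0), (0, 1))
lod = ray_cone_lod(1 / 256, (0, 0, -1), (0, 0, 1), tri, 256, 256)
```

In this setup a cone one texel wide selects level 0, and doubling the cone width raises the level by one, which is the behavior a single trilinear lookup needs.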

SYYSGRAPH 2018 – Keynote: Modern Graphics Abstractions & Real-Time Ray Tracing

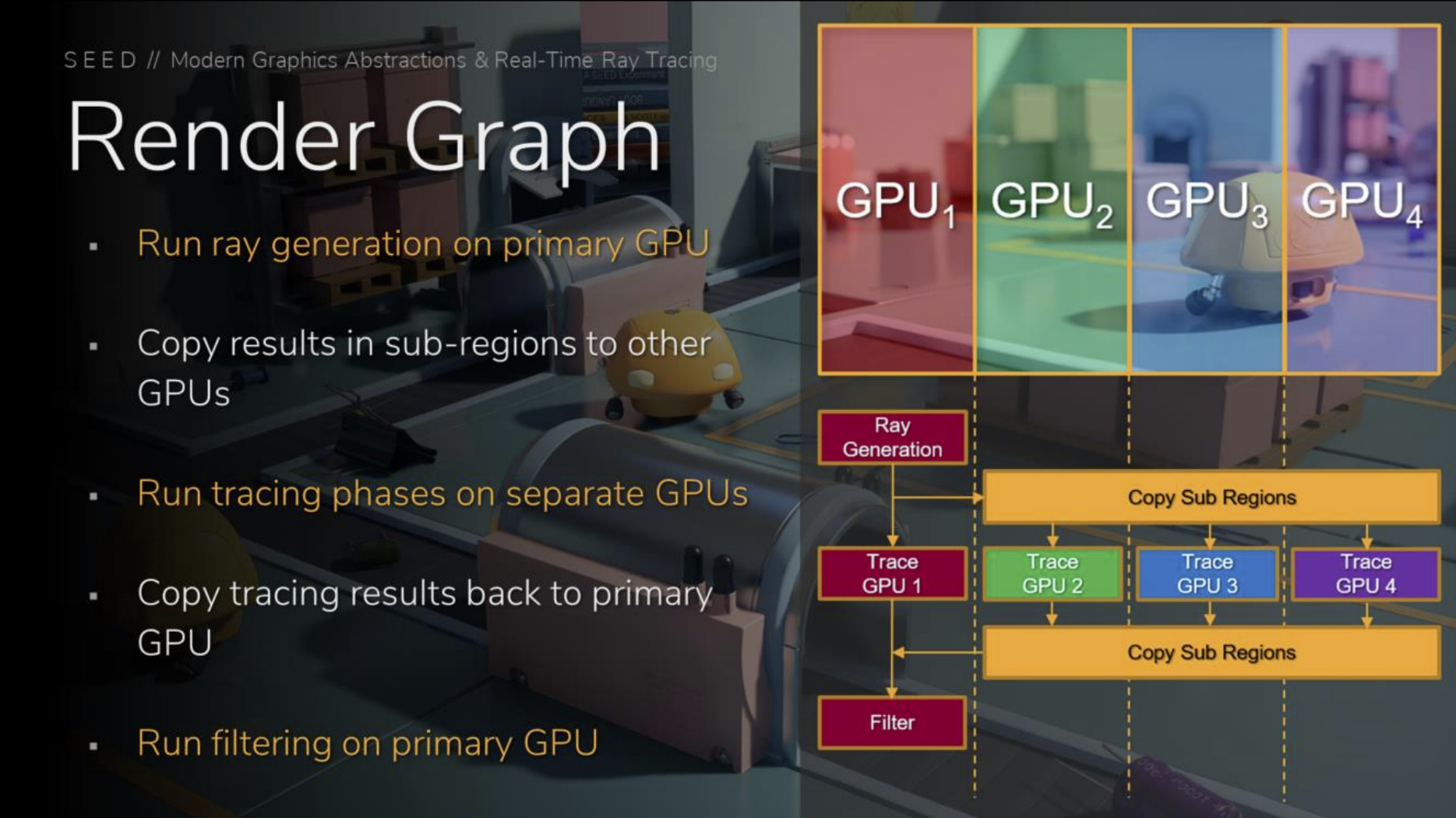

Graham Wihlidal and Colin Barré-Brisebois of SEED attended SYYSGRAPH 2018 in Helsinki and presented to the group. The first section described aspects of Halcyon’s rendering architecture, including information on explicit heterogeneous and virtual multi-GPU, render graph, and the remote render proxy backend. The second section discussed real-time ray tracing approaches and current open problems. The following day, this presentation was also given as a lecture at Aalto University.

Co-Author: Graham Wihlidal

NVIDIA Video Series 2018 – Shiny Pixels and Beyond: Real-Time Raytracing at SEED

Blog post on NVIDIA’s website showcasing our work on real-time raytracing.

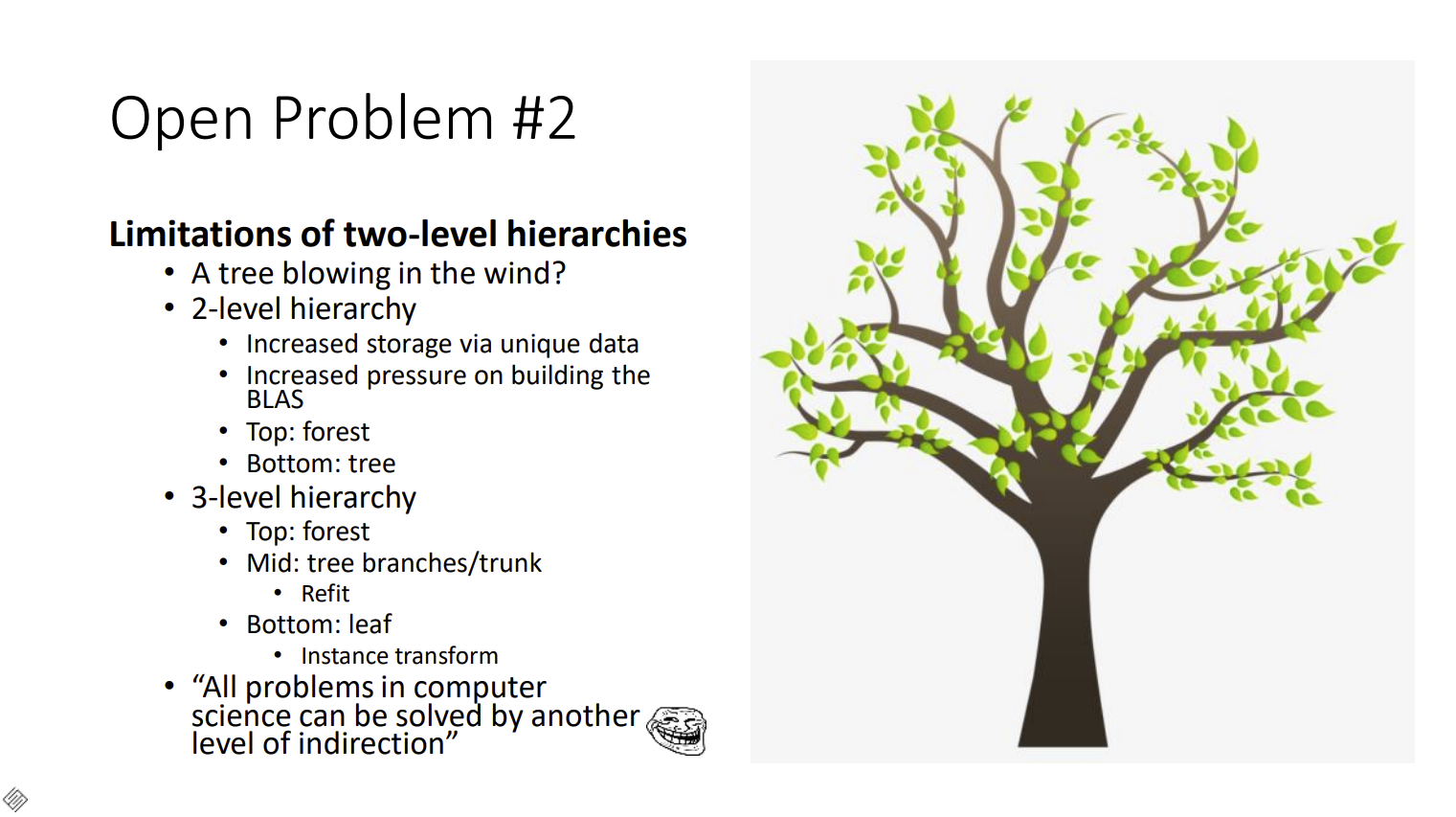

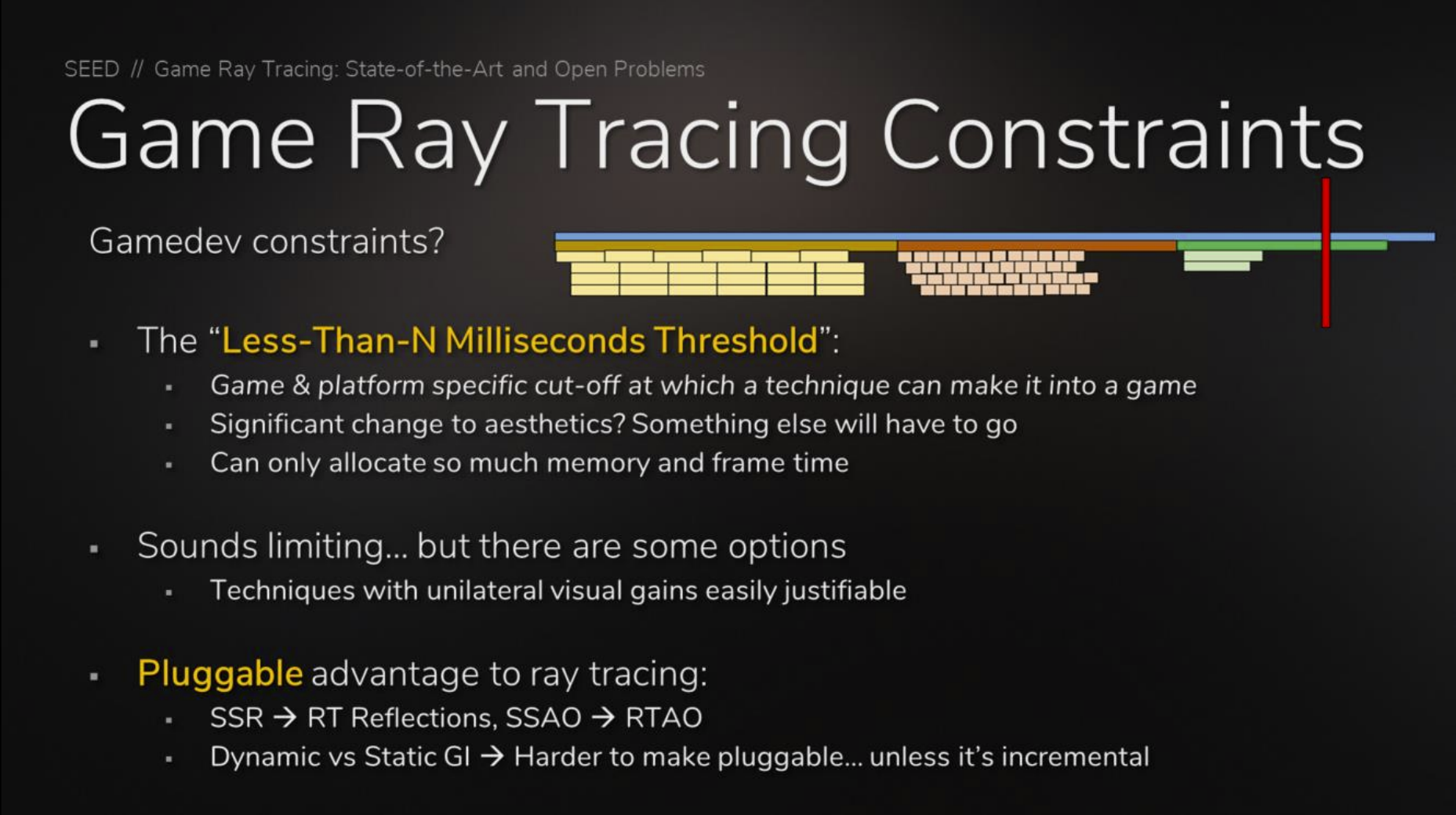

HPG 2018 – Keynote: Game Ray Tracing: State-of-the-Art and Open Problems

For this year’s keynote at High Performance Graphics 2018 (HPG), Colin Barré-Brisebois discussed the state of the art in real-time game ray tracing. He explored some of the connections between offline and real-time game ray tracing, and presented some of the open problems. Colin outlined a few potential solutions to those problems and issued a call to arms on topics where the ray tracing research community and the games industry should unite to solve them.

SIGGRAPH 2018 – Introduction to DirectX Raytracing Course: Full Rays Ahead! From Raster to Real-Time Raytracing

This course is an introduction to Microsoft’s DirectX Raytracing API suitable for students, faculty, rendering engineers, and industry researchers. The first half focuses on ray tracing basics and incremental, open-source shader tutorials accessible for novices. The second half covers API specifics for developers integrating ray tracing into existing raster-based applications.

Course Co-Authors: Chris Wyman (Host, NVIDIA), Shawn Hargreaves (Microsoft), Peter Shirley (NVIDIA)

Slides (PDF), Video Recording, Course Website, ACM Library Reference.

SIGGRAPH 2018 – Real-Time Ray Tracing: PICA PICA and NVIDIA Turing

In this presentation, Colin Barré-Brisebois and Henrik Halén from SEED present some of their latest real-time ray tracing research, shown at the announcement of NVIDIA’s new GPU architecture, Turing, which features hardware ray tracing acceleration. The presentation shows how the new hardware enabled SEED’s Texture Space Ray Tracing to power novel transparent shadow rendering and rough refractions in real-time.

Co-Author: Henrik Halén

GDC 2018: Microsoft DirectX Raytracing

Announcing Microsoft’s DirectX Raytracing with Microsoft.

Co-Presenters: Matt Sandy (Microsoft), Johan Andersson

GDC 2018: Shiny Pixels and Beyond – Real-Time Raytracing at SEED

In this talk, we presented results from the latest research from SEED, a cross-disciplinary team working on cutting-edge, future graphics technologies and creative experiences at Electronic Arts. We explained in detail the techniques from our research, so that attendees could understand how these results were achieved. We hope to inspire developers and provide a glimpse of the future with novel rendering techniques that could power the creative experiences of tomorrow.

Co-Presenter: Johan Andersson

SIGGRAPH 2017 – Open Problems in Real-Time Rendering Course: A Certain Slant of Light: Past, Present and Future Challenges of Global Illumination in Games

Global illumination (GI) has been an ongoing quest in games. The perpetual tug-of-war between visual quality and performance often forces developers to take the latest and greatest from academia and tailor it to push the boundaries of what has been realized in a game product. Many elements need to align for success, including image quality, performance, scalability, interactivity, ease of use, as well as game-specific and production challenges.

First we will paint a picture of the current state of global illumination in games, addressing how the state of the union compares to the latest and greatest research. We will then explore various GI challenges that game teams face from the art, engineering, pipelines and production perspective. The games industry lacks an ideal solution, so the goal here is to raise awareness by being transparent about the real problems in the field. Finally, we will talk about the future. This will be a call to arms, with the objective of uniting game developers and researchers on the same quest to evolve global illumination in games from being mostly static, or sometimes perceptually real-time, to fully real-time.

Presented in the Open Problems in Real-Time Rendering Course

Course Co-Presenters: Natalya Tatarchuk (Host, Unity), Aaron Lefohn (Host, NVIDIA), Sébastien Lagarde (Unity Technologies), Andrew Lauritzen (SEED), Marco Salvi (NVIDIA)

Slides (PDF), Slides (PPTX), Course Website, ACM Library Reference.

GTC 2014: DirectX11 Rendering & NVIDIA GameWorks in Batman: Arkham Origins

An extended version of the GDC 2014 “Deformable Snow Rendering in Batman: Arkham Origins” talk.

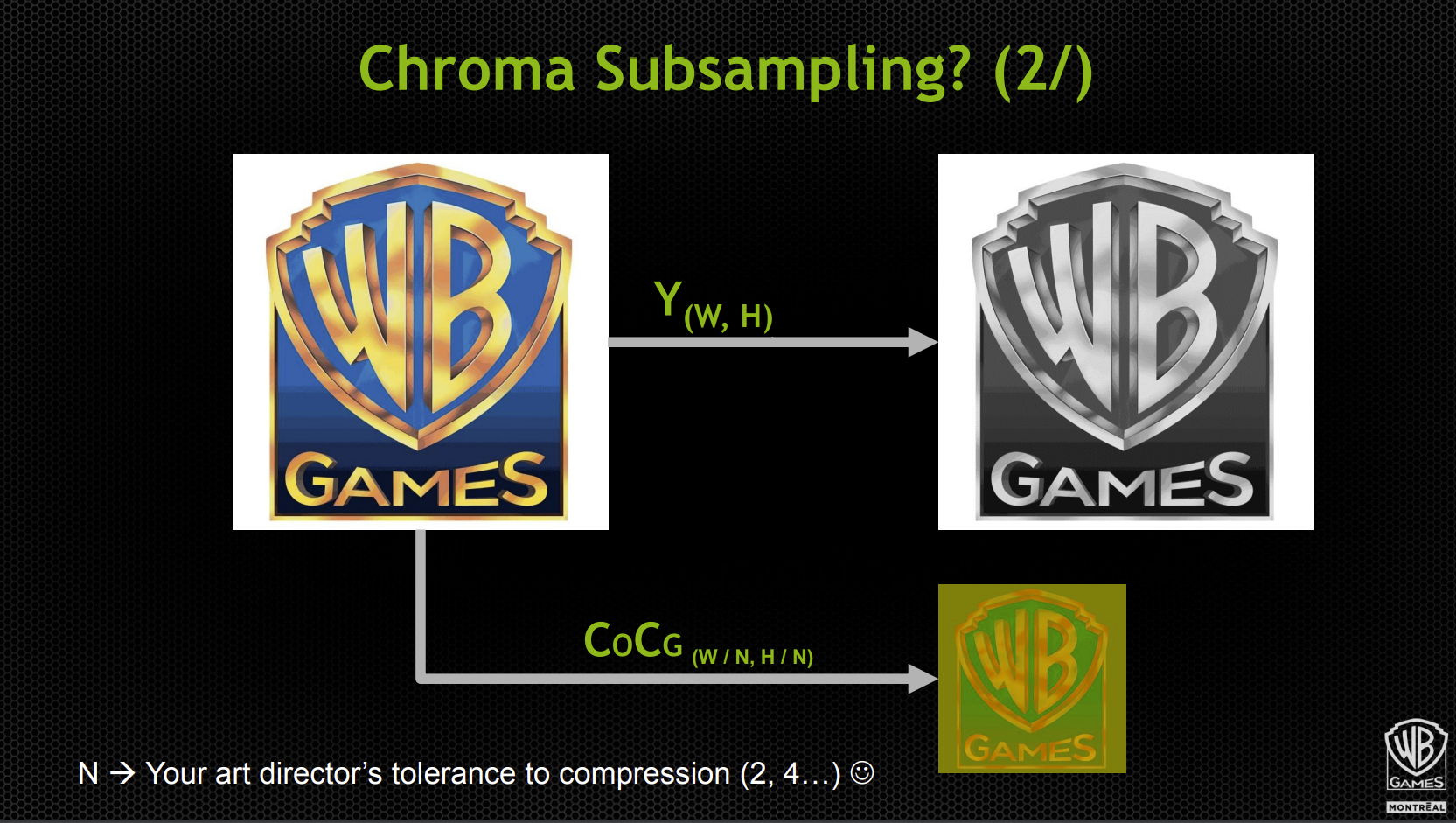

This talk focuses on several DirectX 11 features developed and integrated in collaboration with NVIDIA. Tessellation is presented, along with the integration of NVIDIA GameWorks features such as physically based particles with PhysX, particle fields with Turbulence, HBAO+, and bokeh DOF. Other improvements are also showcased: Reoriented Normal Mapping and chroma subsampling for lightmap compression.

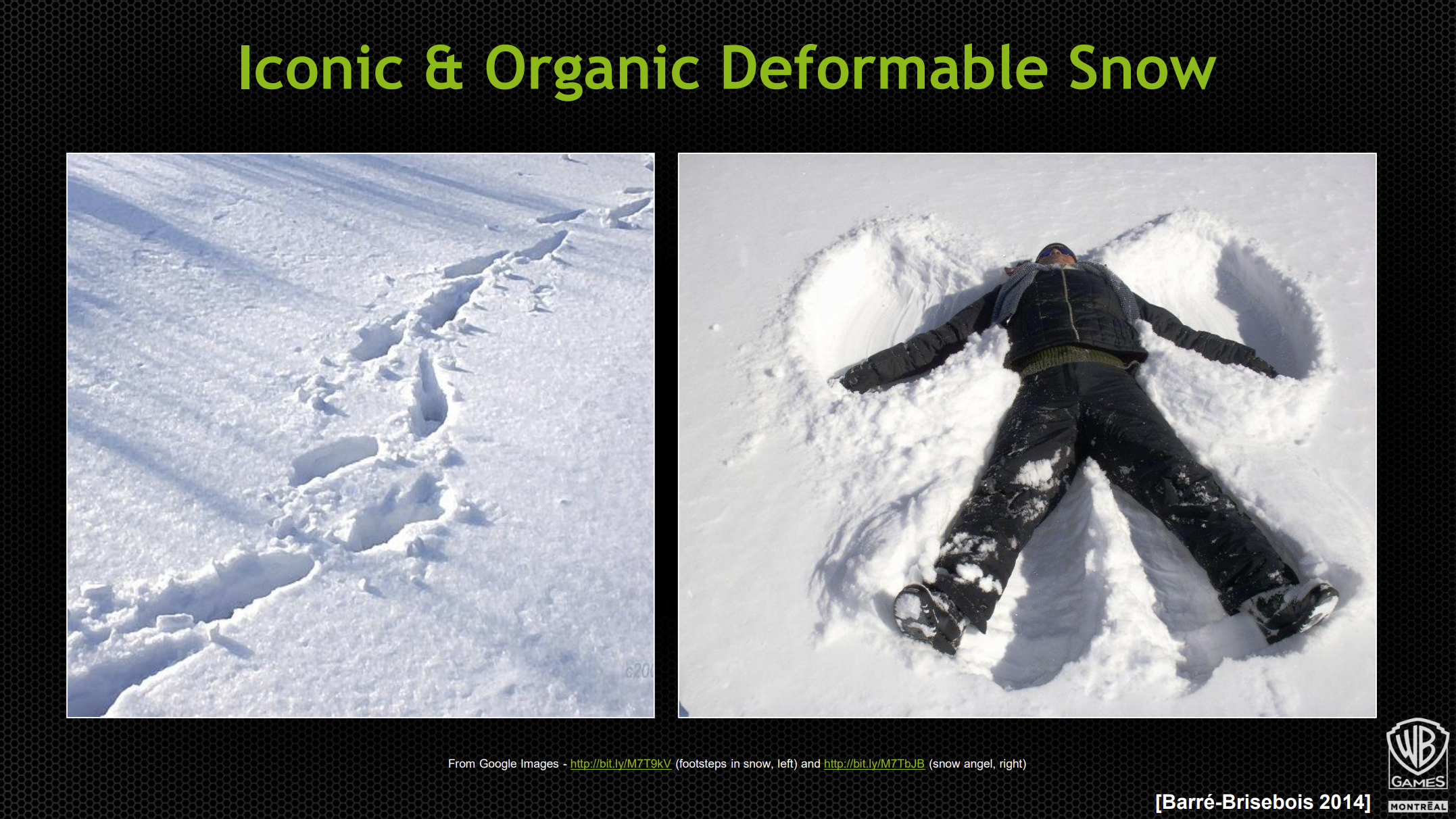

GDC 2014: Deformable Snow Rendering in Batman: Arkham Origins

This talk presents a novel technique for the rendering of surfaces covered with fallen deformable snow featured in Batman: Arkham Origins. The technique allows for visually convincing and organically interactive deformable snow surfaces everywhere characters can stand/walk/fight/fall, is extremely fast, has a low memory footprint, and can be used extensively in an open-world game.

I3D 2013 – Panel: “What Keeps You Up At Night?”

Game industry panel at the intersection of game development and applied research

Co-Panellists: Stephen Hill (Host, Ubisoft), Colt McAnlis (Google), Jim Hejl (EA Sports), Marco Salvi (Intel), Jean-Francois St-Amour (Ubisoft)

2012: Reoriented Normal Mapping

It’s a seemingly simple problem: given two normal maps, how do you combine them? In particular, how do you add detail to a base normal map in a consistent way? We examine several popular methods and cover a new approach, Reoriented Normal Mapping, that does things a little differently. This isn’t an exhaustive survey with all the answers, but hopefully we’ll encourage you to re-examine what you’re currently doing, whether it’s at run time or in the creation process itself.
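As a rough illustration of the reorientation idea, the Python sketch below blends two already-unpacked tangent-space normals (components in [-1, 1], z pointing out of the surface). It is a minimal sketch under those assumptions rather than a copy of the published shader; the function names are illustrative, and the blend is undefined when the base normal points straight down (z = -1).

```python
import math

def normalize(v):
    n = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    return (v[0] / n, v[1] / n, v[2] / n)

def blend_reoriented(base, detail):
    """Rotate `detail` so that it follows the orientation of `base`.

    Both inputs are assumed to be unit-length tangent-space normals.
    """
    # Shifted base normal and mirrored detail normal.
    t = (base[0], base[1], base[2] + 1.0)
    u = (-detail[0], -detail[1], detail[2])
    k = (t[0] * u[0] + t[1] * u[1] + t[2] * u[2]) / t[2]
    r = (t[0] * k - u[0], t[1] * k - u[1], t[2] * k - u[2])
    return normalize(r)

# Usage: a flat base leaves the detail normal untouched, and a flat
# detail map leaves the base normal untouched.
flat = (0.0, 0.0, 1.0)
base = normalize((0.3, 0.1, 0.9))
detail = normalize((-0.2, 0.4, 0.8))
blended = blend_reoriented(base, detail)
```

The two identity cases in the usage comment are a quick sanity check for any normal-blending function: a method that fails them will visibly alter surfaces where one of the maps carries no detail.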

Co-Author: Stephen Hill

Article (Blog), ShaderToy (Implementation).

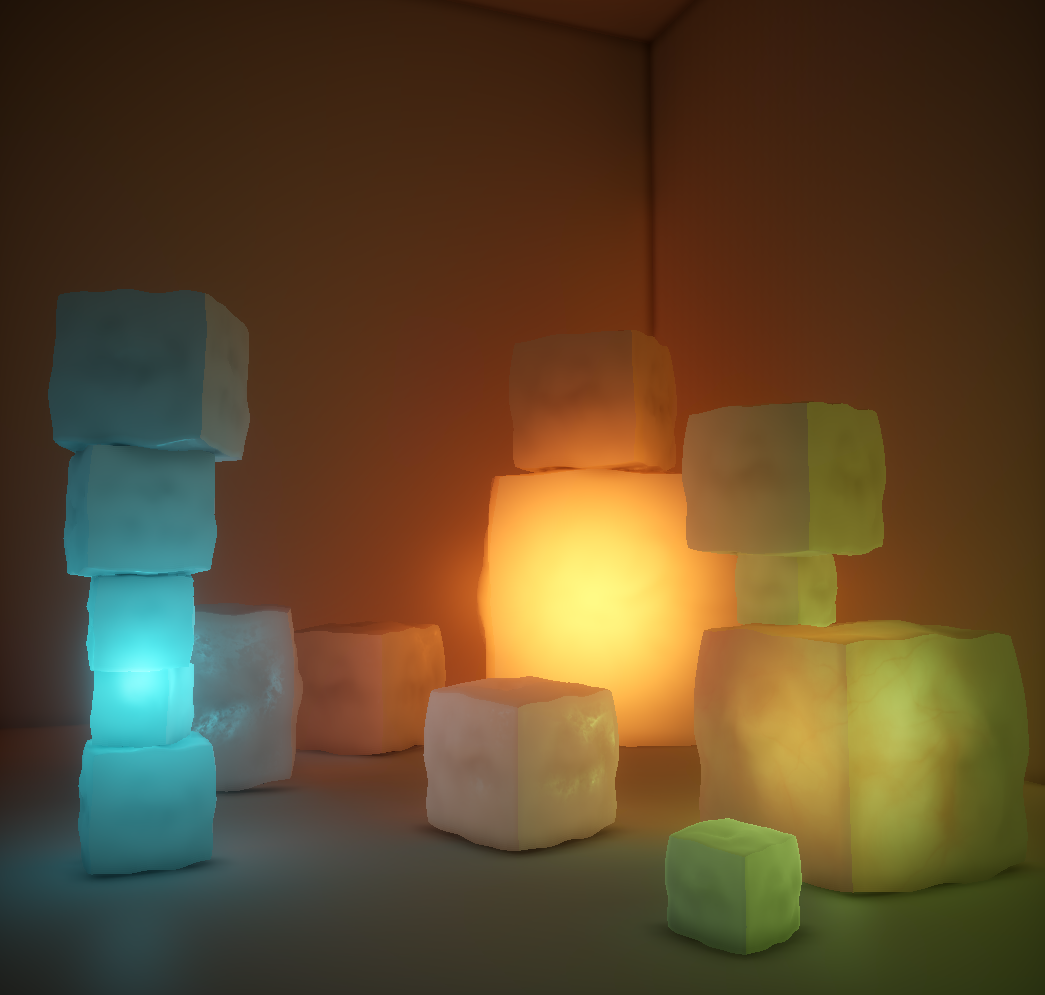

GDC 2011: Approximating Translucency for a Fast, Cheap and Convincing Subsurface Scattering Look

This talk presents a rendering technique which allows for approximating translucency for a fast, cheap, and convincing subsurface scattering look. Also describes how to approximate surface local thickness.
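For readers who want a feel for this kind of approximation, here is a minimal Python sketch of a view-dependent back-lighting term modulated by a local thickness value. All names and default values are illustrative assumptions, not the talk's exact shader, and the thickness value is assumed to be encoded so that larger values let more light through.

```python
def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def saturate(x):
    return max(0.0, min(1.0, x))

def translucency(light_dir, view_dir, normal, local_thickness,
                 distortion=0.0, power=1.0, scale=1.0,
                 ambient=0.1, attenuation=1.0):
    """Scalar light-transport factor to multiply with albedo and light color.

    light_dir: unit vector from the surface towards the light.
    view_dir:  unit vector from the surface towards the eye.
    local_thickness: value from a precomputed map (assumed encoding:
                     larger means more light passes through).
    """
    # Bend the light vector along the normal to fake scattering inside the object.
    lt_light = tuple(l + n * distortion for l, n in zip(light_dir, normal))
    # Strongest when the viewer looks straight at a light behind the surface.
    back = saturate(dot(view_dir, tuple(-c for c in lt_light)))
    lt_dot = (back ** power) * scale
    return attenuation * (lt_dot + ambient) * local_thickness

# Usage: light directly behind the surface versus directly in front of it.
behind = translucency((0, 0, -1), (0, 0, 1), (0, 0, 1), 0.5)
in_front = translucency((0, 0, 1), (0, 0, 1), (0, 0, 1), 0.5)
```

With the light behind the surface the term is dominated by the view-dependent lobe, while with the light in front only the ambient part remains, so thin regions glow when backlit without any ray marching.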

Co-Presenter: Marc Bouchard

SIGGRAPH 2011 – Advances in Real-time Rendering in 3D Graphics and Games Course: More Performance! Five Rendering Ideas from Battlefield 3 and Need For Speed: The Run

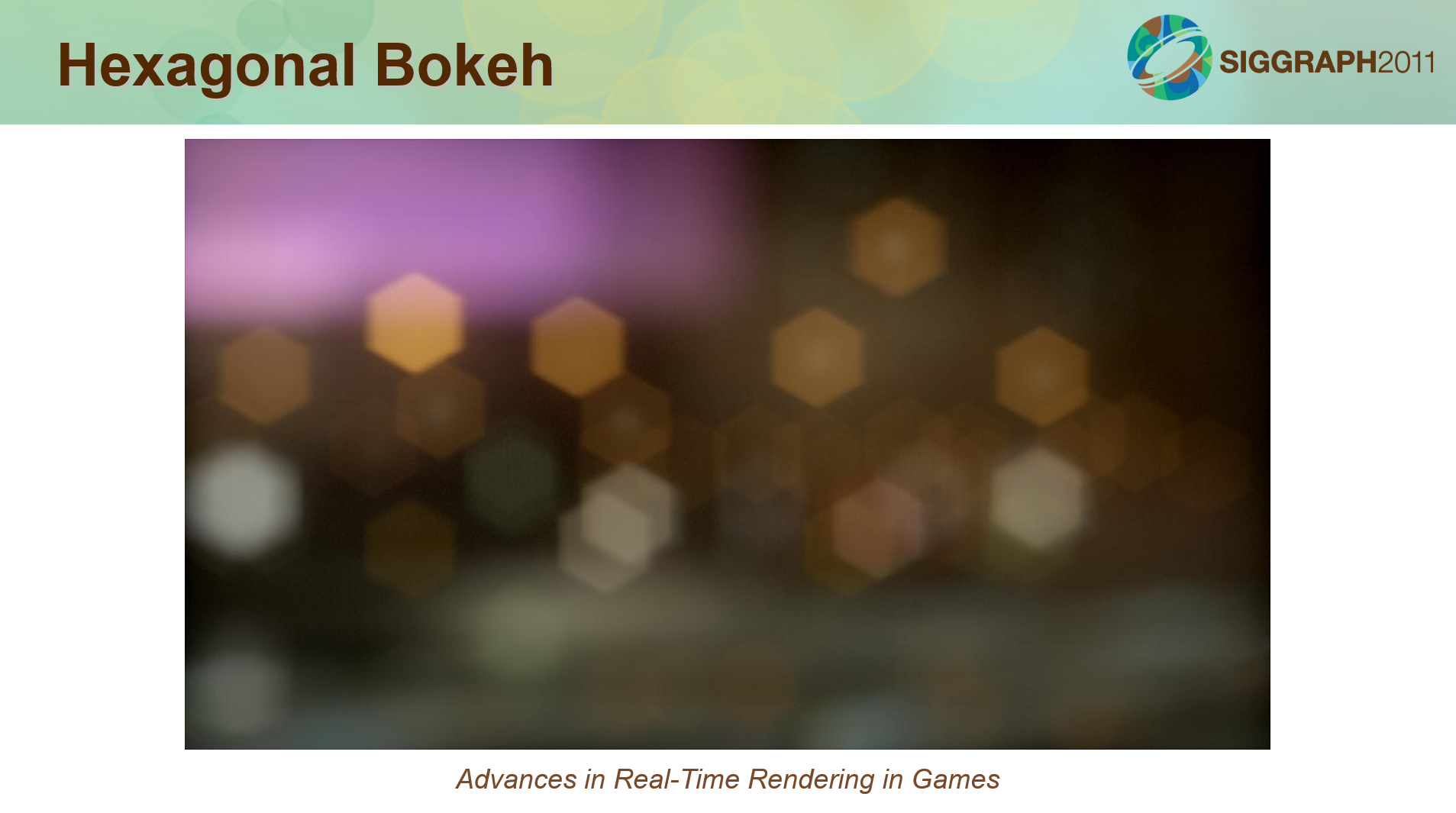

This talk covers techniques from Battlefield 3 and Need For Speed: The Run. Includes chroma sub-sampling for faster full-screen effects, a novel DirectX 9+ scatter-gather approach to bokeh rendering, HiZ reverse-reload for faster shadows, improved temporally-stable dynamic ambient occlusion, and tile-based deferred shading on Xbox 360.

Presented in the Advances in Real-time Rendering in 3D Graphics and Games course, by Natalya Tatarchuk.

Co-Presenter: John White (EA Vancouver)

Slides (PDF), Slides (PPTX), Course Website.

GPU Pro 2 – 2011: Real-Time Approximation of Light Transport in Translucent Homogenous Media

This article presents a rendering technique which allows for approximating translucency for a fast, cheap, and convincing subsurface scattering look. Also describes how to approximate surface local thickness.

Co-Author: Marc Bouchard (EA Montreal)

Buy the Book, ACM Reference Link.

XBOX 360 SDK Sample 2011: Fast Separable Grayscale Blur

XBOX 360 code sample describing how one can easily alias an 8-bit buffer as 32-bit and reduce the cost of a separable low-pass filter by a factor of (at least) four. Sample implementation by Ivan Nevraev, based on SIGGRAPH 2011 Talk “More Performance! Five Rendering Ideas From Battlefield 3 and Need For Speed: The Run”, “Temporally-stable Screen-Space Ambient Occlusion”, slides 91-93.

Co-Author: Ivan Nevraev (Microsoft)

Source: Microsoft Xbox360 XDK AUGUST 2011

CGEMS 2006: Labs and Framework for 2D Content Manipulation

Creating and manipulating 2D content is important for computer scientists and requires knowledge in 2D Computer Graphics and Image Processing. A framework and five labs are proposed to help undergraduate students in Computer Science curricula to master the theory, algorithms, and data structures involved in 2D Computer Graphics and Image Processing. The labs provide a good coverage of topics, allow many alternatives, and can be easily reordered and selected to suit many types of courses. The framework has a working user interface to view and manipulate 2D content as well as adjust the parameters of the algorithms to implement. The framework also provides an architecture that hides most of the difficulties of the user interface and simplifies the implementation of the 2D content manipulation algorithms. Finally, code examples are provided to help the students in understanding how to use the framework to implement the labs.

Co-Authors: Éric Paquette (École de technologie supérieure), Jean-François Barras (ETS), Frank Sébastien Bois (ETS), and Mohammed El Ghaouat (ETS)