Thanks to everyone who attended my GDC talk! Was quite happy to see all those faces I hadn’t seen in a while, as well as meet those whom I only had contact with via Twitter, IM or e-mail.

For those who contacted me post-GDC, it seems the content I submitted for GPU Pro 2 didn’t make it into the final samples archive. I must’ve submitted too late, or it didn’t make it to the editor. Either way, the code in the paper is the most up-to-date, so you should definitely check it out (and/or simply buy the book)!

Roger Cordes sent the following questions. I want to share the answers, since they cover most of the questions people had after the talk:

1. An “inward-facing” occlusion value is used as the local thickness of the object, a key component of this technique. You mention that you render a normal-inverted and color-inverted ambient occlusion texture to obtain this value. Can you give any further detail on how you render an ambient occlusion value with an inverted normal? I am attempting to use an off-the-shelf tool (xNormal) to render ambient occlusion from geometry that has had its normals inverted, and I don’t believe my results are consistent with the Hebe example you include in your article. A related question: is there a difference between rendering the “inward-facing” ambient occlusion versus rendering the normal (outward-facing) ambient occlusion and then inverting the result?

Yes, it is quite different actually. 🙂

If we take the cube (from the talk) as an example, when rendering the ambient occlusion with the original normals, you basically end up with white for all sides, since there’s nothing really occluding each face (or, approximately, the hemisphere of each face, oriented by the normal of that face). Now, if you flip the surface normals, you will end up with a different value at each vertex, because the inner faces that meet at each vertex occlude each other. Basically, by flipping the normals during the AO computation, you are averaging “occlusion” inside the object. The cube is not the best example for this, because it’s pretty uniform, and the demo with the cubes at GDC didn’t really have a map on each object.

In the case of Hebe, it’s quite different:

You can see in the image above how the nose is “whiter” than the other “thicker” parts of the head. And this is the key behind the technique: this map represents local variation of thickness on an object. If we apply the previous reasoning to the head example, this basically means that the polygons inside the head generate occlusion between each other: the closer they are to each other, the more occlusion we get. If we get a lot of occlusion, we know the faces are close to each other, which means that this area of the object is generally “thin”.

Color-inverting the regular (outward-facing) AO doesn’t give the same result as the normal-inverted AO, because we’re not measuring the same thing: once the surface is “flipped”, the faces are oriented towards the inside of the mesh.

If we compare this with regular AO, it’s really not the same thing, and we can clearly see why:

Even color-inverted, you can see that the results are not the same. The occlusion on the original mesh happens on its outside hull, whereas the normal-inverted version gets occlusion from the inside hull, which gives totally different results (and, in a way, totally different shapes).
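To make the “thin areas get more occlusion” idea concrete, here is a minimal Python sketch. It is not the renderer’s implementation and not the code from the paper: it assumes an idealized infinite slab instead of a real mesh, and the function name `inward_occlusion` and its parameters are hypothetical. Rays are cast from a surface point along the flipped normal, and a ray counts as occluded if it reaches the opposite face within `max_dist`.

```python
import math


def inward_occlusion(thickness, max_dist, samples=1024):
    """Fraction of inward-facing rays that hit the opposite face of a slab.

    Assumption: the object is an infinite slab of the given thickness, so a
    ray at angle theta from the flipped normal travels thickness / cos(theta)
    before reaching the opposite face.
    """
    hits = 0
    for i in range(samples):
        # Stratified sample in (0, 1); sqrt(1 - u) gives a cosine-weighted
        # distribution of cos(theta) over the hemisphere.
        u = (i + 0.5) / samples
        cos_theta = math.sqrt(1.0 - u)
        hit_dist = thickness / cos_theta
        if hit_dist <= max_dist:
            hits += 1
    return hits / samples


if __name__ == "__main__":
    # Thin, medium, thick, and "thicker than the ray length" slabs.
    for t in (0.1, 0.5, 0.9, 2.0):
        print(t, inward_occlusion(t, max_dist=1.0))
```

With `max_dist=1.0`, the thin slab (0.1) comes out almost fully occluded, the thick one (0.9) much less so, and a slab thicker than the ray length gets no occlusion at all. For a convex shape like this slab, outward-facing rays never hit anything, so regular AO is uniformly white and color-inverting it only gives uniform black, with no thickness information in it.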

2. In the GDC talk, some examples were shown of the results from using a full RGB color in the local thickness map (e.g. for human skin). The full-color local thickness map itself was not shown, though. I’m curious how these assets are authored, and wonder if you have any examples you might share?

For this demo, we basically just generated the inverted AO map, and multiplied it by a nice uniform and saturated red (the same kind of red you can see when you put your finger in front of a light). It can be more complex, and artists can also paint the various colors they want in order to get better results (e.g. in this case, some shades of orange and red, rather than a uniform color). Nonetheless, we felt it was good enough for this example. With proper tweaking, I’m sure you can make it look even better! 🙂
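As a rough illustration of the “multiply by a uniform red” step, here is a small Python sketch. The tint value and the function name `tint_thickness` are assumptions made up for this example, not the values used in the demo; thickness values are taken to be grayscale floats in [0, 1].

```python
def tint_thickness(thickness_map, tint=(0.9, 0.1, 0.05)):
    """Multiply a grayscale thickness map by a uniform RGB tint.

    thickness_map: list of rows of floats in [0, 1] (the inverted AO map).
    tint: hypothetical saturated red; pick whatever suits the material.
    Returns a list of rows of (r, g, b) tuples.
    """
    return [
        [tuple(value * channel for channel in tint) for value in row]
        for row in thickness_map
    ]


if __name__ == "__main__":
    tiny_map = [[0.0, 0.5], [0.75, 1.0]]
    for row in tint_thickness(tiny_map):
        print(row)
```

A painted color map would simply replace the uniform `tint` with a per-texel color.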

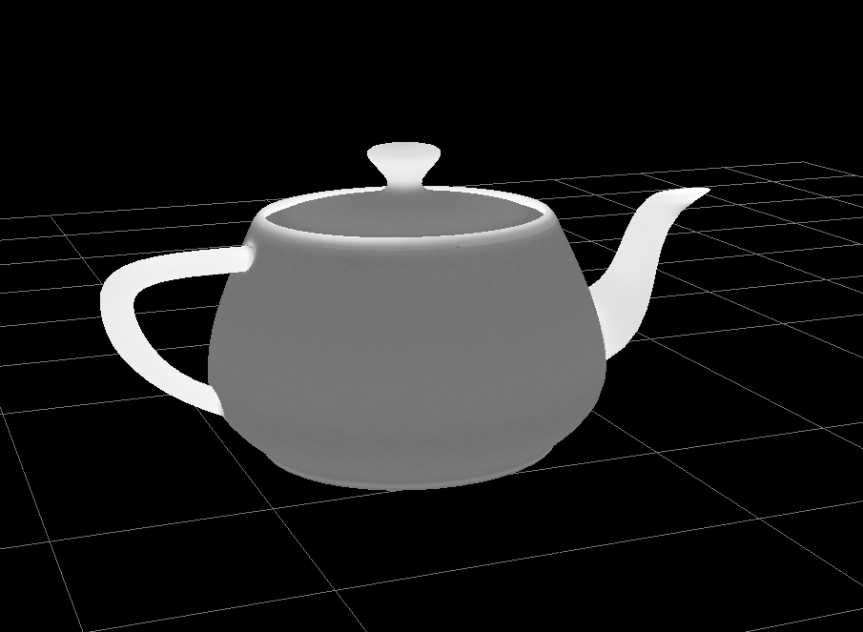

To illustrate how we generated the translucency/thickness maps for the objects in the talk, here’s another example with everyone’s favorite teapot:

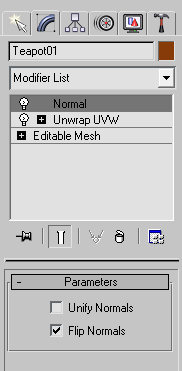

We used 3D Studio MAX. To generate inverted normals, we use the Normal modifier and select Flip normals:

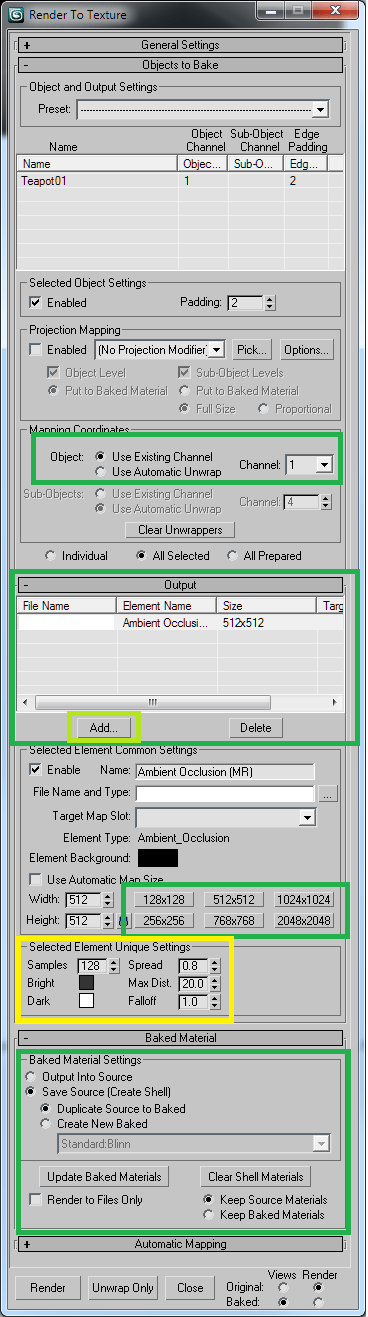

Make sure your mesh is properly UV-unwrapped. We then use the Render to Texture with Mental Ray to generate the ambient occlusion map for the normal-inverted object:

Notice how the Bright and Dark are inverted (compared to the default values). Here are some general rules/tips for generating a proper translucency/thickness map:

- Make sure to select Ambient Occlusion when adding the object as output, and the proper UV channel

- Set the Dark color to the maximum value you want for translucency (white = max, black = min)

- Set the Bright color to the minimum value you want for translucency (e.g. we use 50% gray, because we want minimal translucency everywhere on the object)

- Play with the values in the yellow rectangle to get the results you want

- Max Dist is the distance traveled by the rays. Increase it for a big object, and decrease it for a small one.

- Increase the number of samples and the resolution once you have what you roughly want, for improved visuals (i.e. less noise)
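The Bright/Dark settings above can be read as a remap from occlusion to a translucency value. Here is a minimal Python sketch of that reading; it assumes the occlusion shader blends linearly between the two colors, which I have not verified against the Mental Ray source, and `remap_occlusion` is a hypothetical helper name.

```python
def remap_occlusion(occlusion, bright=0.5, dark=1.0):
    """Blend between the Bright and Dark values based on occlusion.

    occlusion: 0.0 (no inner faces nearby, thick area) to 1.0 (fully
               occluded, thin area), from the normal-inverted AO pass.
    bright:    translucency where nothing occludes (minimum, 50% gray here).
    dark:      translucency where fully occluded (maximum, white here).
    Assumption: the blend is linear.
    """
    return bright + (dark - bright) * occlusion


if __name__ == "__main__":
    for occ in (0.0, 0.5, 1.0):
        print(occ, remap_occlusion(occ))
```

With these defaults, thick areas bottom out at 50% gray, so every part of the object keeps some translucency, while thin areas go up to white.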

That is all for now. Hope this helps! 🙂

hi

nice technique, i’ve got gpu pro 2, and the content is missing (like you said). any chance of uploading it to your site, or sending it to my email?

thanks in advance…

oren k

Hi,

Very interesting technique! Like Oren, I’ve got the GPU Pro 2 book, and it would be great to have the missing samples, since some points are still a bit unclear to me.

Thanks in advance!

Charles G

Hey Oren and Charles,

Not sure why the sample didn’t end up in the book’s samples zip file. I’ll host the updated sample for the technique and will provide a link in the following days! 🙂