An attempt at more blogging, but this happened in the meantime, which is why you might find that some of the tweets below are from a few months ago. 😉

A topic of discussion that comes up every now and then between programmers, technical artists and lighting artists is the concept of light masking, or Lighting Channels, and whether this concept is still valid. I’ve had this discussion many times before with developers out there (and somehow I’m sure you have too). Artists and programmers alike, opinions diverge. To get a new sample on the matter I decided to ask the twitter-verse:

Light Channels – Yay or Nay (Twitter Poll)

Yup, a division! Before we go over the discussion and the many answers people provided, which I will mix/interleave throughout this post to give perspective, let’s first cover some ground and make sure we all talk about the same thing.

Light(ing) Channels?

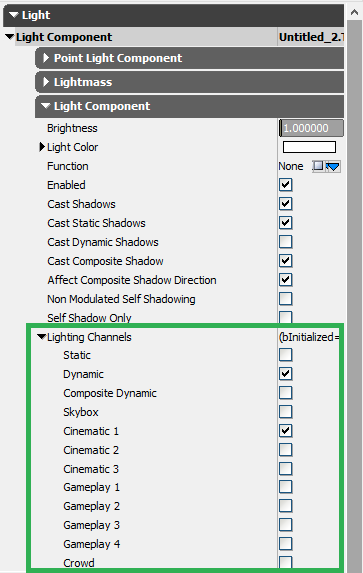

In layman’s terms, Lighting Channels (LC) is the functionality of masking lights on a per-object basis, or on a subset of objects that meet the masking criteria. Environment-only, character-only, and cinematic-only lights are a few examples that come to mind.

Lighting Channels in UDK – Point light affecting Dynamic objects tagged as Cinematic 1 [1]

This inclusion/exclusion concept allows lighting artists to have more fine-grained, manual control on light interactions. The image above describes a light affecting dynamic objects such as characters and others, but not the static environment. This case is especially common for cut-scenes, where lighting artists can clearly identify the key, fill and rim lights for each character, for each shot, to ensure that the art-directed lighting is manicured and behaves as expected.
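To make the inclusion/exclusion idea concrete, here is a minimal C++ sketch of lighting channels as a bitmask test. The channel names, the `Light`/`Object` structs, and the `channelBit`, `affects` and `lightsFor` helpers are all hypothetical and only there for illustration; this is not how UDK (or any specific engine) implements it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: the bit for channel `index` in an 8-bit mask.
inline std::uint8_t channelBit(unsigned index) {
    assert(index < 8 && "only 8 channels fit in this mask");
    return static_cast<std::uint8_t>(1u << index);
}

// Hypothetical channel names, chosen to mirror the examples in the text.
const std::uint8_t kEnvironment = channelBit(0);
const std::uint8_t kCharacter   = channelBit(1);
const std::uint8_t kCinematic1  = channelBit(2);

struct Light  { std::uint8_t channelMask; };  // channels the light shines on
struct Object { std::uint8_t channelMask; };  // channels the object belongs to

// A light affects an object only if they share at least one channel.
inline bool affects(const Light& light, const Object& object) {
    return (light.channelMask & object.channelMask) != 0;
}

// Indices of the lights that pass the channel test for one object.
std::vector<std::size_t> lightsFor(const Object& object,
                                   const std::vector<Light>& lights) {
    std::vector<std::size_t> result;
    for (std::size_t i = 0; i < lights.size(); ++i) {
        if (affects(lights[i], object)) {
            result.push_back(i);
        }
    }
    return result;
}
```

With this sketch, a key light masked to `kCharacter | kCinematic1` would light a character tagged `kCharacter`, but skip a wall tagged `kEnvironment`.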

3-point light setup example from a TV show

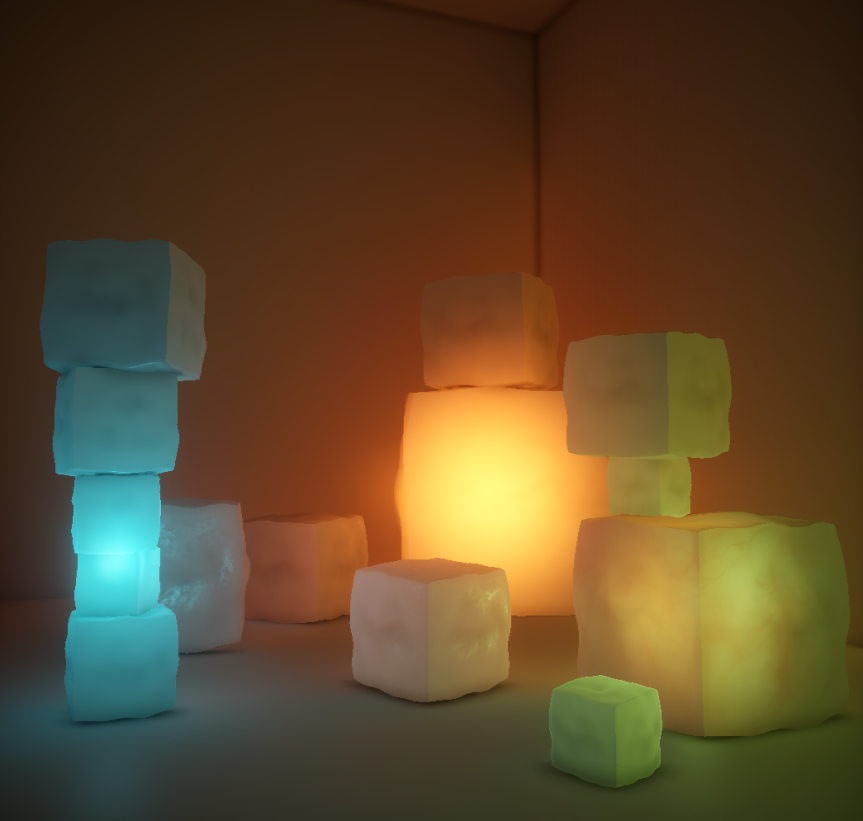

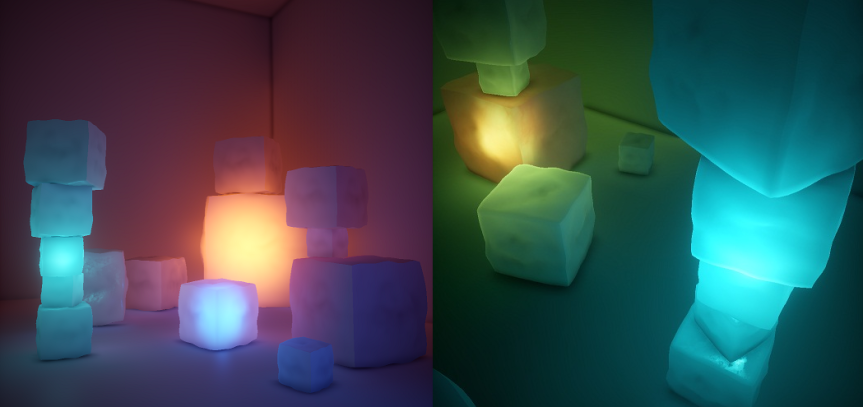

Lighting channels are not limited to characters and cut-scenes. Other classic examples come to mind, such as additional lights to manually enhance/fix up global illumination (i.e. faking/adding custom diffuse inter-reflection, or “bounce”), or additional lights for animated/hero objects.

Light Channels vs Light Merging

A bit of a side topic, but a concept often intertwined with light channels is light merging. In the context of forward lighting and how it was done back then, prior to tiled or clustered approaches [2] [3], iterating over all potentially affecting lights on a per-object basis would greatly affect performance, especially on older hardware. To palliate this issue, dynamically lit objects were often lit by a subset of the lights present in the scene: a select number of the closest lights, or lights dynamically merged/coalesced [1] based on brightness/luminous flux or distance, or even merged using spherical harmonics [4] [5] to extract the n most relevant lights (often 3). Lights could also be merged by taking their affecting channels into account.
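As a rough illustration of the simplest variant above (picking a handful of lights per object rather than truly merging them), here is a C++ sketch. The relevance heuristic (intensity with inverse-square falloff) and every name in it (`PointLight`, `relevance`, `selectLights`) are assumptions made for this example, not the scheme used by any of the referenced titles.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

struct PointLight {
    Vec3  position;
    float intensity;  // scalar brightness, units left abstract
};

// Squared distance between two points.
inline float distanceSquared(const Vec3& a, const Vec3& b) {
    const float dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return dx * dx + dy * dy + dz * dz;
}

// Assumed heuristic: brightness attenuated by inverse-square distance.
inline float relevance(const PointLight& light, const Vec3& objectCenter) {
    const float d2 = std::max(distanceSquared(light.position, objectCenter), 1e-4f);
    return light.intensity / d2;
}

// Indices of the `maxLights` most relevant lights, most relevant first.
std::vector<std::size_t> selectLights(const std::vector<PointLight>& lights,
                                      const Vec3& objectCenter,
                                      std::size_t maxLights) {
    assert(maxLights > 0);
    std::vector<std::size_t> order(lights.size());
    for (std::size_t i = 0; i < order.size(); ++i) {
        order[i] = i;
    }

    // Only the first `count` entries need to be sorted.
    const std::size_t count = std::min(maxLights, order.size());
    std::partial_sort(order.begin(), order.begin() + count, order.end(),
                      [&](std::size_t a, std::size_t b) {
                          return relevance(lights[a], objectCenter) >
                                 relevance(lights[b], objectCenter);
                      });
    order.resize(count);
    return order;
}
```

Calling `selectLights(lights, objectCenter, 3)` keeps the per-object cost bounded no matter how many lights the scene contains. The price is exactly the discrepancy discussed next: whatever falls outside the top three is dropped for that object.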

What’s the connection with light channels, you say? Well, beyond lights being merged based on their channels, it turns out that while merging lights can provide a “good approximation” of light interactions with dynamic objects in certain scenarios, without having to compute all interactions, you still end up with a discrepancy between lights that have been manually placed by artists and lights that have been merged for dynamic objects. To compensate, lighting artists often requested additional “forced/non-merged” lights. Unfortunately this often led to too many of these lights, and we were back at square one with regard to the performance benefits of lighting with merged lights. Basically, a performance-affecting visual workaround to fix a performance workaround. It’s getting complicated…

A Workaround For Something Broken?

At this point you probably feel like something’s odd, not working, or simply that light channels are a hack for something broken in the way we light scenes. And you’d be right to think so. To put things in perspective, though we/programmers work really hard to constantly improve their representation and behavior, real-time lights in video games don’t generally behave the way they’re intuitively expected to. Of the many discrepancies, the following stand out:

- Shadows are commonly missing from many lights

  - Only a few select (key) lights get shadows, not all of them.

  - Shadowless lights shine through walls, and can hit unintended targets.

- Lights don’t (all) trigger inter-reflections / indirect illumination

  - The lack of proper GI on all dynamic lights means only direct illumination.

  - If your engine has real-time GI, it is most likely limited to a few lights.

The lack of good GI / inter-reflection / indirect illumination causes artists to want to manually add secondary/fill fixup lights to artificially simulate such effects, for both environments and characters, separately but sometimes handling both cases simultaneously. In practice this can work, but it can easily become a mess of lights, unless you are very strict and handle these in separate layers. And even if you are organized, since most of these lights will not cast shadows, things often end up getting hit by artificial lights that shouldn’t reach them.

One common example is the Fridge Mouth Effect: the glowing of characters’ mouths caused by fill lights that are intended to light characters’ faces, or enhance the lighting on the environment, but aren’t shadowed and end up lighting up the inside of characters’ mouths. The same artifact also shows up on ears with translucency and no self-shadowing.

Fridge Mouth Effect – Non-shadow casting fill light coming from the right, during a randomly positioned cutscene in Fallout 4

Artists then want to isolate where these fixups happen. They want to work around the shadowing and GI limitations by controlling where the light ends up. This is where light channels come in, along with other exotic modifications to physically-plausible light attenuation, such as custom falloff curves. The latter is up for another discussion. 😉

Missing Shadows Feels Like a Big Deal. Is this it?

If we had shadows on everything, it feels like most of these issues would be non-issues. At the end of the day, it’s also about competing priorities: total artist control vs practical AAA production realities:

Using flags to enforce rules to compensate for the lack of light/shadow behavior would make some sense if it weren’t for the fact that it 1) doesn’t work well with deferred, 2) creates lighting discrepancies, 3) significantly increases scene management complexity for both art & code, and 4) breaks global illumination. In this day and age, with the sheer number of available dynamic lights, heavy usage of real-time & static shadow caching atlases, and more game teams working at solving real-time GI properly, it feels like asking for light channels is a matter of convenience: an approach that used to work on previous-generation titles, and a consequence of getting used to the lighting systems of the previous era.

But I Really Want/Need To Make This Work With Deferred…

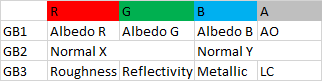

Simple G-Buffer, with Lighting Channel (LC)

In the case of deferred, dedicating a full channel for storing a bitmask is probably not what you want to do. If you really want to make this work, instead you can store a subset of essential lighting channels with a few bits “borrowed” from other channels. Nonetheless, you will have to figure out how this interacts with your forward path, your particles, your more complex multi-layer environments/scenes, and if it’s worth the hassle.
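As a sketch of what “borrowing” a few bits could look like, the C++ below packs a 2-bit lighting channel mask into the low bits of an 8-bit G-buffer channel, leaving 6 bits for a quantized scalar. The layout, the bit counts and all function names are hypothetical; in practice this logic would live in shader code, and which channel can afford to lose precision depends entirely on your G-buffer.

```cpp
#include <cassert>
#include <cstdint>

// Assumed layout of one 8-bit G-buffer channel (hypothetical):
//   bits 7..2: quantized scalar in [0, 1] (e.g. a material parameter)
//   bits 1..0: lighting channel mask
constexpr unsigned kChannelBits = 2;
constexpr unsigned kChannelMask = (1u << kChannelBits) - 1u;        // 0b11
constexpr unsigned kValueLevels = (1u << (8 - kChannelBits)) - 1u;  // 63

// G-buffer write: quantize the scalar to 6 bits and append the channel mask.
inline std::uint8_t packChannel(float value01, unsigned lightingChannels) {
    assert(value01 >= 0.0f && value01 <= 1.0f);
    assert(lightingChannels <= kChannelMask);
    const unsigned quantized =
        static_cast<unsigned>(value01 * static_cast<float>(kValueLevels) + 0.5f);
    return static_cast<std::uint8_t>((quantized << kChannelBits) | lightingChannels);
}

// G-buffer read: recover the channel mask from the low bits.
inline unsigned unpackLightingChannels(std::uint8_t packed) {
    return packed & kChannelMask;
}

// G-buffer read: recover the scalar, now with only 64 distinct levels.
inline float unpackValue(std::uint8_t packed) {
    return static_cast<float>(packed >> kChannelBits) /
           static_cast<float>(kValueLevels);
}

// Lighting pass: skip the pixel when light and surface share no channel.
inline bool lightAffectsPixel(std::uint8_t packed, unsigned lightChannels) {
    return (unpackLightingChannels(packed) & lightChannels) != 0;
}
```

The trade-off is visible in the constants: two channels cost a quarter of the channel’s range, so the borrowed-from value drops from 256 to 64 levels.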

So, What’s The Conclusion?

Taking a step back and looking at how we use our tools, figuring out what works and what doesn’t with technology, and rethinking our workflows is part of being game developers, and applies to all professions in the field. Never being satisfied and always looking to improve how we work is necessary to our success, individually but also as an industry. Such an industry-wide challenge happened not too long ago with the first generation of games that showcased PBR. I recall discussions with friends who pioneered this at various studios, and it wasn’t easy to get everyone on board, until the industry saw the true value in the long-term investment, and embraced the change. Now, it’s hard to go back. 😉

In the case of lighting approaches & tools, while we still have some major challenges to tackle with shadows and global illumination, maybe it’s also time to take a leap of faith, and think about the long-term value of moving away from some old concepts such as light channels. That being said, I invite you to have this discussion with the various rendering programmers, lighting artists and technical artists at your studio. Get perspective on your game’s needs, and figure out what needs to happen to get everyone on board with a solution that works for everybody. Keep the conversations going, and blog about what worked for your project.

It’s not necessarily about the conclusion, but rather about the discussion. Looking forward to hearing about it, and how your investment in shadows and unified lighting & GI solutions has paid off in the long run. 😉

Addendum – Another Perspective From The Movie Industry

Addendum – New York Times

I was asked by the New York Times if I could do a shorter version of this article, for a tech column:

In case you haven’t seen the original article. A few might find this amusing 😉

Thanks

Thanks to everyone who provided feedback by responding to the Twitter poll (Bart Wronski, Steve Anichini, Sébastien Lagarde, Paul Greveson, Don Williamson, Stephen Hill, and Jordan Walker), and especially Jon Greenberg and Nicolas Lopez for the additional feedback and conversations. Was nice to have both artists and programmers express their views. Let’s keep the conversations going, super important for our industry!

References

[1] Unreal Developer Kit (UDK), “Light Environments”, Online.

[2] Harada, Takahiro. “Forward+: Bringing Deferred Lighting To The Next Level”, Eurographics 2012. Online.

[3] Olsson, Ola. “Clustered Deferred and Forward Shading”, HPG 2012. Online.

[4] Greenberg, Jon. “Hitting 60Hz in Unreal Engine”, GDC 2009. Online.

[5] Greenberg, Jon. “Dynamic Lighting in Mortal Kombat vs DC Universe”, 2012. Online.